Lede

Everyone is arguing about who wins the AGI race while standing beside an empty pool, dressed for a dive, applauding the tiles.

Hermit Off Script

The AGI race has become the new tech horoscope: Google moon rising, OpenAI in retrograde, Anthropic entering the seventh house of benchmark superiority. Lately, every podcast, YouTube panel and LinkedIn prophet with a ring light seems to be placing bets on which lab reaches AGI first, as if artificial general intelligence were a Premier League table rather than an undefined cliff with a marketing department.

I do not think the winner will be decided by whichever model looks best this quarter. The decisive move will probably be a breakthrough, the kind of jump that makes the previous scoreboard look like monks arguing over candle brightness five minutes before electricity arrives. ChatGPT did that in November 2022. GPT-4o had its own moment in May 2024. Then, as usual, the miracle became furniture. Give it a year or two and suddenly every serious lab has something that can reason, code, summarise, hallucinate politely and apologise like a Victorian butler caught stealing biscuits.

The market then pretends there is one winner, but users do not live inside benchmark charts. A coder may swear by Claude. Another may get better results from Codex. A writer may prefer one voice, a researcher another, a business user the one that does not turn a simple task into a TED Talk wearing a lab coat. There is no clean winner in the real world because the real world is not a leaderboard. It is messy, local, need-based, and allergic to fanboy certainty.

The funniest part is that the lab everyone ignores may be the one sharpening the actual knife in the dark. The frontier was once LLMs. Then reasoning models arrived. Then agents. Then multimodal systems. Each time, the old crown looked expensive and slightly silly. The influencers want a horse race. The real story may be a trapdoor.

What does not make sense

- Calling it an AGI race while the major labs do not even share one clean public definition of AGI.

- Treating benchmark leadership as destiny, when the same model can feel brilliant at coding and useless in a daily workflow.

- Pretending one company has already won because it leads this month, as if the AI frontier has not been humiliating yesterday’s champions since 2022.

- Acting as if Google, OpenAI and Anthropic are the only possible players, while cheaper or open models keep appearing from places the pundits have not monetised yet.

- Confusing “more intelligent on a chart” with “more useful in my actual life”, which is how software becomes religion with a monthly subscription.

Sense check / The numbers

- ChatGPT was introduced by OpenAI on November 30, 2022, and Reuters reported that it was estimated to have reached 100 million monthly active users in January 2023, roughly 2 months after launch. That was the shockwave, not a tidy forecast from influencer tea leaves. [OpenAI, Reuters]

- GPT-4o was announced on May 13, 2024, with OpenAI describing it as a flagship model able to reason across audio, vision and text in real time. That mattered because the jump was experiential, not just another spreadsheet flex. [OpenAI]

- Google announced Gemini 2.5 Pro on March 25, 2025, and later listed Gemini 2.5 Pro as generally available on Vertex AI with a June 17, 2025 release date. The point is simple: the labs keep catching up, then renaming the hill. [Google, Google Cloud]

- Anthropic announced Claude 4 on May 22, 2025, calling Claude Opus 4 a leading coding model, then released Claude Opus 4.1 on August 5, 2025 for agentic tasks, real-world coding and reasoning. That supports the user-experience point: one lab may feel best in one lane, not all lanes. [Anthropic]

- Stanford’s 2025 AI Index said the performance gap between the top and 10th-ranked models fell from 11.9 per cent to 5.4 per cent in a year, while the top 2 were separated by just 0.7 per cent. Translation: the frontier is tightening, which makes fanboy certainty look like a spreadsheet wearing aftershave. [Stanford HAI]

- METR found in a 2025 controlled study that experienced open-source developers took 19 per cent longer when using early-2025 AI tools, although later work showed mixed evidence of speedups. That is the real-world poison pill: capability is not the same as productivity. [METR]

The sketch

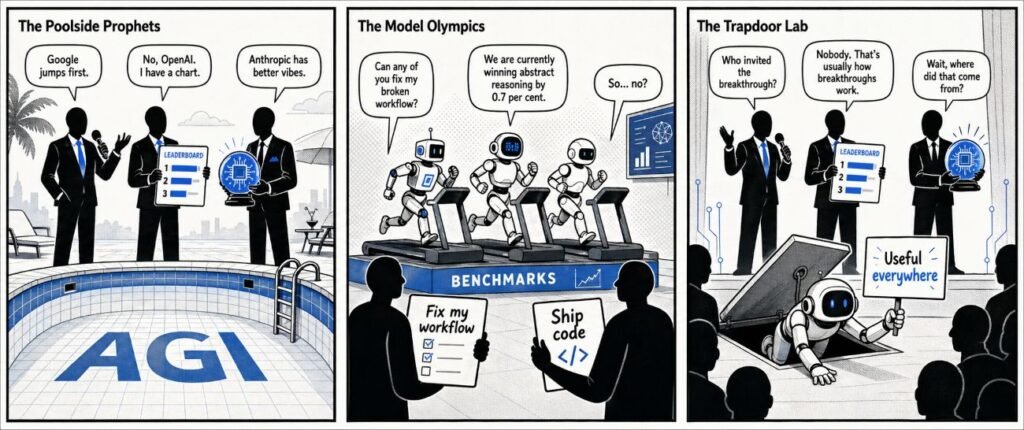

Scene 1: The Poolside Prophets

Three tech influencers stand beside an empty swimming pool marked “AGI”. One holds a microphone, one holds a leaderboard, one holds a crystal ball shaped like a GPU.

Bubble 1: “Google jumps first.”

Bubble 2: “No, OpenAI. I have a chart.”

Bubble 3: “Anthropic has better vibes.”

Scene 2: The Model Olympics

Three lab mascots sprint on treadmills labelled “benchmarks”, while ordinary users stand nearby holding actual tasks.

Bubble 1: “Can any of you fix my broken workflow?”

Bubble 2: “We are currently winning abstract reasoning by 0.7 per cent.”

Bubble 3: “So… no?”

Scene 3: The Trapdoor Lab

A small unlabelled door opens under the stage. A quiet model crawls out holding a sign: “Useful everywhere.” The influencers do not notice because they are arguing about thumbnails.

Bubble 1: “Who invited the breakthrough?”

Bubble 2: “Nobody. That is usually how breakthroughs work.”

What to watch, not the show

- Compute access: who can afford the training, inference and energy bill when the hype invoice arrives.

- Product fit: which assistant people actually keep using after the demo glow dies.

- Coding reliability: whether agents can complete messy work without turning the repo into soup.

- Regulation and safety: how far labs can push before governments, lawsuits or catastrophic risk frameworks slow the music.

- Distribution: Google has Android, Search, YouTube and Workspace; OpenAI has ChatGPT and developer gravity; Anthropic has strong coding credibility and enterprise trust.

- Open and cheaper models: the serious threat is not always the biggest lab, but the model that becomes good enough and cheap enough.

- Benchmarks ageing fast: every new test becomes a training target, then a marketing badge, then wallpaper.

The Hermit take

The AGI race is not a horse race.

It is a foggy lake, and everyone is selling binoculars.

Keep or toss

Keep.

Keep the curiosity, the testing, and the daily comparison between models. Toss the prophecy market, the lab tribalism, and the fantasy that superintelligence will politely arrive through the front door wearing a badge.

Sources

- OpenAI, Introducing ChatGPT: https://openai.com/index/chatgpt/

- Reuters, ChatGPT sets record for fastest-growing user base: https://www.reuters.com/technology/chatgpt-sets-record-fastest-growing-user-base-analyst-note-2023-02-01/

- OpenAI, Hello GPT-4o: https://openai.com/index/hello-gpt-4o/

- Google, Gemini 2.5: Our most intelligent AI model: https://blog.google/innovation-and-ai/models-and-research/google-deepmind/gemini-model-thinking-updates-march-2025/

- Google Cloud, Gemini 2.5 Pro on Vertex AI: https://docs.cloud.google.com/vertex-ai/generative-ai/docs/models/gemini/2-5-pro

- Anthropic, Introducing Claude 4: https://www.anthropic.com/news/claude-4

- Anthropic, Claude Opus 4.1: https://www.anthropic.com/news/claude-opus-4-1

- Stanford HAI, The 2025 AI Index Report: https://hai.stanford.edu/ai-index/2025-ai-index-report

- METR, Measuring the Impact of Early-2025 AI on Experienced Open-Source Developer Productivity: https://metr.org/blog/2025-07-10-early-2025-ai-experienced-os-dev-study/

- METR, Developer Productivity Experiment Update: https://metr.org/blog/2026-02-24-uplift-update/
