Lede

AI adoption is not slow because users fear the future, but because the future keeps forgetting the status bar.

Words used

- AGI: Artificial general intelligence, usually meaning a system that can perform most human-level intellectual tasks across domains.

- GenAI: Generative AI, such as chatbots, image tools and code assistants.

- Proof of concept: A test version built to see if a system is useful before putting it into real production.

- Agentic AI: AI systems that are meant to take actions across tools, workflows or software with less human prompting.

Hermit Off Script

Why many users still don’t use AI for complex tasks is not really a mystery. Companies keep asking why adoption is slower than expected, while they keep showing benchmark fireworks and forgetting that most users live in the kitchen, not in the laboratory. The answer is simple: the models still fail too often at small, obvious tasks. Sometimes they look brilliant on complex reasoning and then fall over a basic instruction like a genius slipping on a banana skin in front of the whole office. For many people, simple tasks are exactly where trust is born. If a model fails once on something easy, the user does not think, “Wonderful, let me now give it my production workflow, private data and Excel macro that can break half my week.” They close the tab, try Claude, try Gemini, try anything else, and suddenly AI use becomes roulette with better fonts. In my experience, ChatGPT is still the strongest general tool, especially for Excel macros and code, and it has improved a lot with newer models. But even extended reasoning can miss the tiny things that matter, such as showing progress in the status bar before actions run, asking before changing risky features, or checking the limits of Excel instead of pretending the spreadsheet is a small obedient moon. That is where the AGI circus becomes funny. We are told the machines are coming for all knowledge work, but they still need a human beside them saying, “No, mate, don’t delete the worksheet.” Complex tasks need domain knowledge. If the user does not understand the work, they cannot verify the answer, and that means bad code, broken systems, security holes and future pain wearing a shiny productivity badge. Maybe by May 2027 it will be better. For now, the model can write poetry about automation, then forget to lock the door.

What does not make sense

- Companies ask why users don’t trust AI in production, while shipping tools that still need a human babysitter for basic workflow safety.

- Benchmarks reward impressive reasoning, but users remember the one small failure that wasted an hour.

- The hype says “agentic”, while the reality often says “please check every line before this thing touches your files”.

- A model can explain software architecture, then miss the boring practical step that keeps the spreadsheet usable.

- Users with no task knowledge are pushed towards advanced work, then blamed when they cannot detect the model’s confident mistakes.

- The market treats switching between ChatGPT, Claude and Gemini as consumer choice, when for many users it feels like pulling a lever on a casino machine.

- Businesses want automation, but many still have not priced the human verification needed after the AI has finished sounding clever.

Sense check / The numbers

- Stanford’s 2025 AI Index says 78 per cent of organisations reported using AI in 2024, up from 55 per cent in 2023, while global private investment in generative AI reached $33.9 billion. [Stanford AI Index]

- Gartner reported in January 2026 that at least 50 per cent of GenAI projects were abandoned after proof of concept by the end of 2025, citing poor data quality, risk controls, costs or unclear business value. [Gartner]

- Stack Overflow’s 2025 Developer Survey found that 84 per cent of respondents were using or planning to use AI tools in development, but 46 per cent actively distrusted AI accuracy compared with 33 per cent who trusted it. [Stack Overflow]

- The same Stack Overflow survey found 66 per cent of developers were frustrated by AI answers that were “almost right”, and 45.2 per cent said debugging AI-generated code was more time-consuming. [Stack Overflow]

- SimpleBench reported an 83.7 per cent human baseline from a small sample of 9 people, while all 13 tested language models scored lower on everyday reasoning tasks. [SimpleBench]

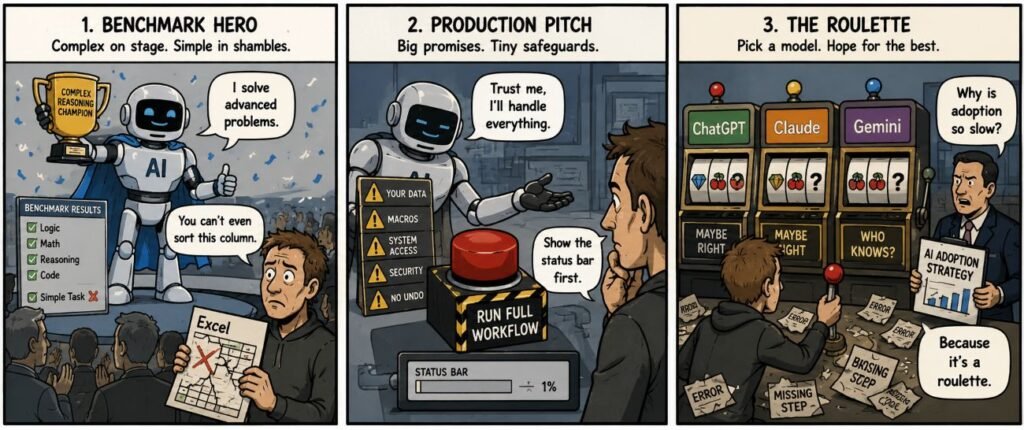

The sketch

Scene 1: The Benchmark Parade

Panel description. A giant robot stands on a stage holding a trophy labelled “Complex Reasoning Champion”. Executives clap from a balcony. A small user at the bottom holds a broken spreadsheet.

Dialogue:

Robot: “I solve advanced problems.”

User: “You can’t even sort this column.”

Scene 2: The Production Pitch

Panel description. The robot offers a button marked “Run Full Workflow”. Behind it are warning signs for data, macros and security. The user looks at a tiny missing progress bar.

Dialogue:

Robot: “Trust me. I’ll handle everything.”

User: “Show the status bar first.”

Scene 3: The Roulette Desk

Panel description. Three slot-machine handles are labelled ChatGPT, Claude and Gemini. The user pulls one while a manager holds a sign saying “AI adoption strategy”.

Dialogue:

Manager: “Why is adoption so slow?”

User: “Because it’s a roulette.”

What to watch, not the show

- Money: companies need adoption to justify model costs, investor pressure and infrastructure spending.

- Incentives: benchmark wins are easier to market than boring reliability in Excel, emails, code reviews and workflows.

- Platform logic: vendors want users locked into ecosystems before the tools are fully dependable.

- Labour: the hidden work is human checking, testing, debugging and fixing after the AI output arrives.

- Safety: weak review of AI-generated code can create security and maintenance problems later.

- Ownership: users must know who is responsible when an AI-written task damages files, data or business operations.

- Long-term risk: if simple mistakes keep happening, users will treat AI as a toy assistant rather than serious infrastructure.

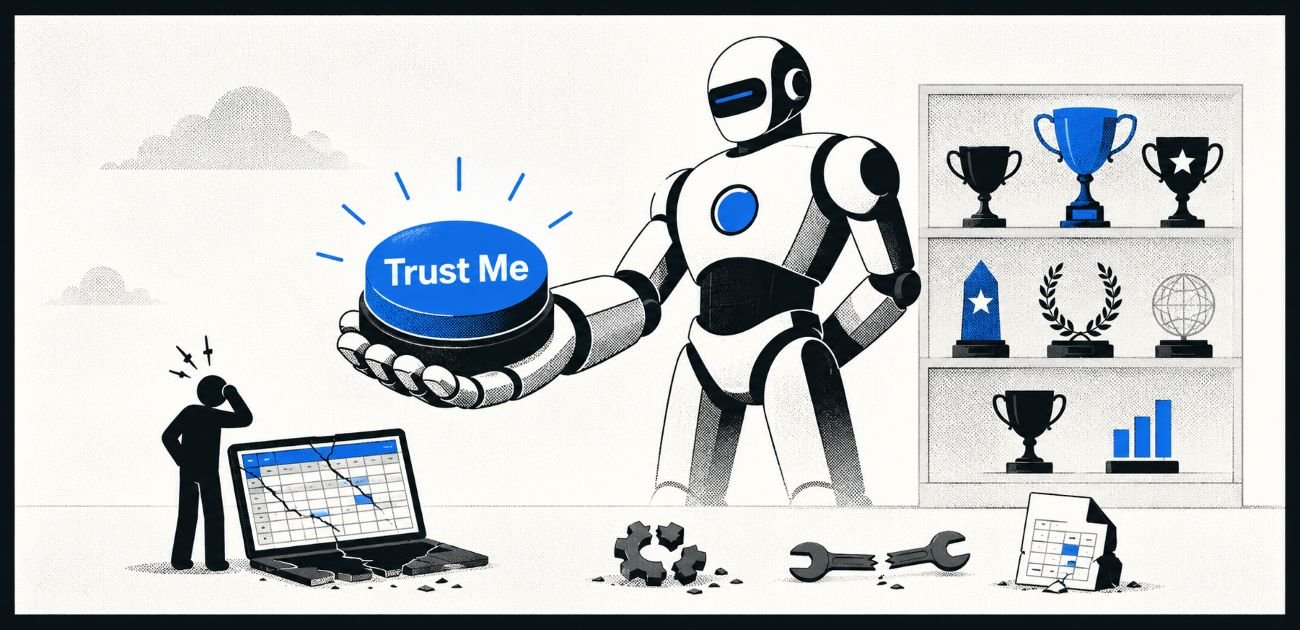

The Hermit take

Trust is not won by saying “AGI” louder.

It is won by doing the small task correctly before touching the big one.

Keep or toss

Keep / Toss.

Keep AI as a sharp assistant for users who can verify the work.

Toss the fantasy that production trust comes from benchmark confetti.

Sources

- Stanford AI Index 2025: https://hai.stanford.edu/ai-index/2025-ai-index-report

- Gartner GenAI project failure article: https://www.gartner.com/en/articles/genai-project-failure

- Stack Overflow 2025 AI survey: https://survey.stackoverflow.co/2025/ai

- Stack Overflow 2025 Developer Survey overview: https://survey.stackoverflow.co/2025

- SimpleBench benchmark: https://simple-bench.com/

- Reuters on agentic AI project cancellations: https://www.reuters.com/business/over-40-agentic-ai-projects-will-be-scrapped-by-2027-gartner-says-2025-06-25/

Leave a Reply