Lede

The world is being sold superintelligence, but delivered a confident intern that sometimes can’t tell nuance from noise.

Hermit Off Script

I use OpenAI’s ChatGPT 5.2 for some tasks, and somehow I end up roasting the AI hype almost every time. Not because it’s useless. It’s useful. It’s just a really smart dumb AI: the kind that can write like a genius and then miss the distinctions and nuance that the whole LLM training story brags about in the first place.

And while the leaders of the “superintelligence” push talk as if the robots are about to take all our jobs, my forecast is the opposite of their victory lap: the companies that rush to replace human workers with AI will end up hiring the same people back and apologising for the mess. Because in real work, you don’t get paid for sounding clever; you get paid for being right when things are messy. Sometimes I honestly wonder whether we’re even using the same models they use in-house, because what I see in the wild, even with the pro reasoning models, is not the level of reliability the hype is selling.

So yes, to all the companies out there replacing workers with AI: you’re going to regret it. It’s not time yet. Maybe in a decade, maybe less. Right now it’s still a dumb smart tool, and you don’t build a business by outsourcing judgement to that.

What does not make sense

- If AI is “ready to replace whole departments”, why does it still confidently invent details when the question gets messy?

- If the product is “reasoning”, why do you still need a human to sanity-check basic assumptions?

- If the goal is productivity, why do so many deployments create extra work: verifying, correcting, escalating, apologising?

- If companies “trust the model”, why do they keep adding human override workflows and “talk to an agent” buttons?

- If this is the inevitable future, why does it already look like the past: automation first, rehiring later?

Sense check / The numbers

- The World Economic Forum projected job disruption equal to 22 per cent of today’s jobs by 2030, with 170 million roles created and 92 million displaced, for a net gain of 78 million. [WEF]

- On January 28, 2026, the UK technology secretary, Liz Kendall, said “some jobs will go” and announced a plan to train up to 10 million Britons in basic AI skills by 2030. [Guardian]

- OpenAI’s own help guidance says ChatGPT can be wrong, including by fabricating quotes, studies, and citations, and that it can sound confident while doing it. [OpenAI Help]

- Klarna said its AI chatbot handled 2.3 million chats in its first month and could do the work of 700 representatives. The company later shifted course to ensure customers can always speak to a human, and began recruiting human staff again. [CX Dive]

- In January 2026, the IMF head cited research suggesting 60 per cent of jobs in advanced economies will be affected by AI (enhanced, transformed, or eliminated). [Guardian]

The sketch

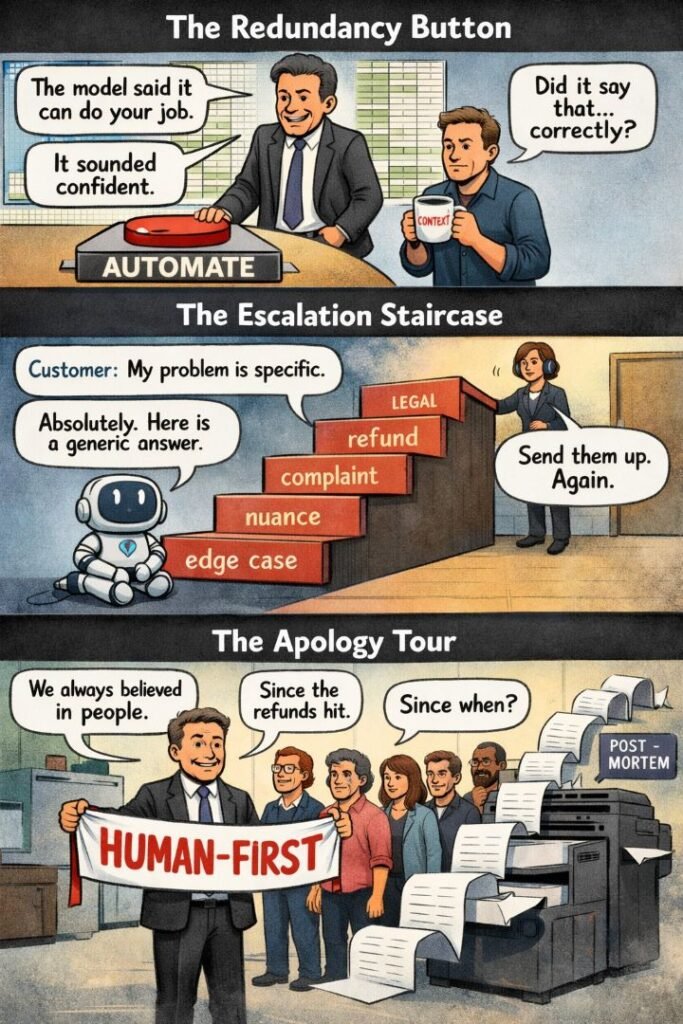

Scene 1: “The Redundancy Button”

Panel: A manager stands over a big button labelled “AUTOMATE”, with a spreadsheet halo. A human worker holds a mug labelled “context”.

Dialogue:

- Manager: “The model said it can do your job.”

- Worker: “Did it say that… correctly?”

- Manager: “It sounded confident.”

Scene 2: “The Escalation Staircase”

Panel: A chatbot sits at the bottom of a staircase. Each step is labelled: “edge case”, “nuance”, “complaint”, “refund”, “legal”. A human is waiting at the top with a headset.

Dialogue:

- Customer: “My problem is specific.”

- Chatbot: “Absolutely. Here is a generic answer.”

- Human: “Send them up. Again.”

Scene 3: “The Apology Tour”

Panel: The same manager now holds a banner reading “HUMAN-FIRST”. Behind them: a queue of rehired staff and a printer spitting out “post-mortem” documents.

Dialogue:

- Manager: “We always believed in people.”

- Staff: “Since when?”

- Manager: “Since the refunds hit.”

What to watch, not the show

- Cost-cutting dressed up as innovation, then rebranded as “efficiency”.

- Procurement theatre: buying “AI” as a badge, not a measured capability.

- Metrics that reward speed over correctness, until the wrong answer costs money.

- Missing accountability: nobody owns the damage when the model is “just a tool”.

- Skills debt: fewer juniors hired, then nobody left who understands the work.

- Trust decay: customers learn the company has become harder to reach, not better.

The Hermit take

AI should sit next to your workers, not on their graves.

If your plan is “replace humans”, your real plan is “rebuild later, noisily”.

Keep or toss

- Keep: AI for drafts, triage, and pattern-spotting.

- Toss: the fantasy that it can replace judgement, empathy, and responsibility in 2026.

Sources

- WEF press release summary (jobs created and displaced): https://www.weforum.org/press/2025/01/future-of-jobs-report-2025-78-million-new-job-opportunities-by-2030-but-urgent-upskilling-needed-to-prepare-workforces/

- WEF report section (jobs outlook, 170m/92m/78m, 22%): https://www.weforum.org/publications/the-future-of-jobs-report-2025/in-full/2-jobs-outlook/

- The Guardian (UK tech secretary, 10m training plan): https://www.theguardian.com/technology/2026/jan/28/artificial-intelligence-will-cost-jobs-admits-liz-kendall

- OpenAI Help Center (hallucinations and verification): https://help.openai.com/en/articles/8313428-does-chatgpt-tell-the-truth

- OpenAI (why language models hallucinate): https://openai.com/index/why-language-models-hallucinate/

- CX Dive (Klarna recruits humans again, 700 claim, 2.3m chats): https://www.customerexperiencedive.com/news/klarna-reinvests-human-talent-customer-service-AI-chatbot/747586/

- The Guardian (IMF head, 60 per cent jobs affected claim): https://www.theguardian.com/technology/2026/jan/23/ai-tsunami-labour-market-youth-employment-says-head-of-imf-davos