Lede

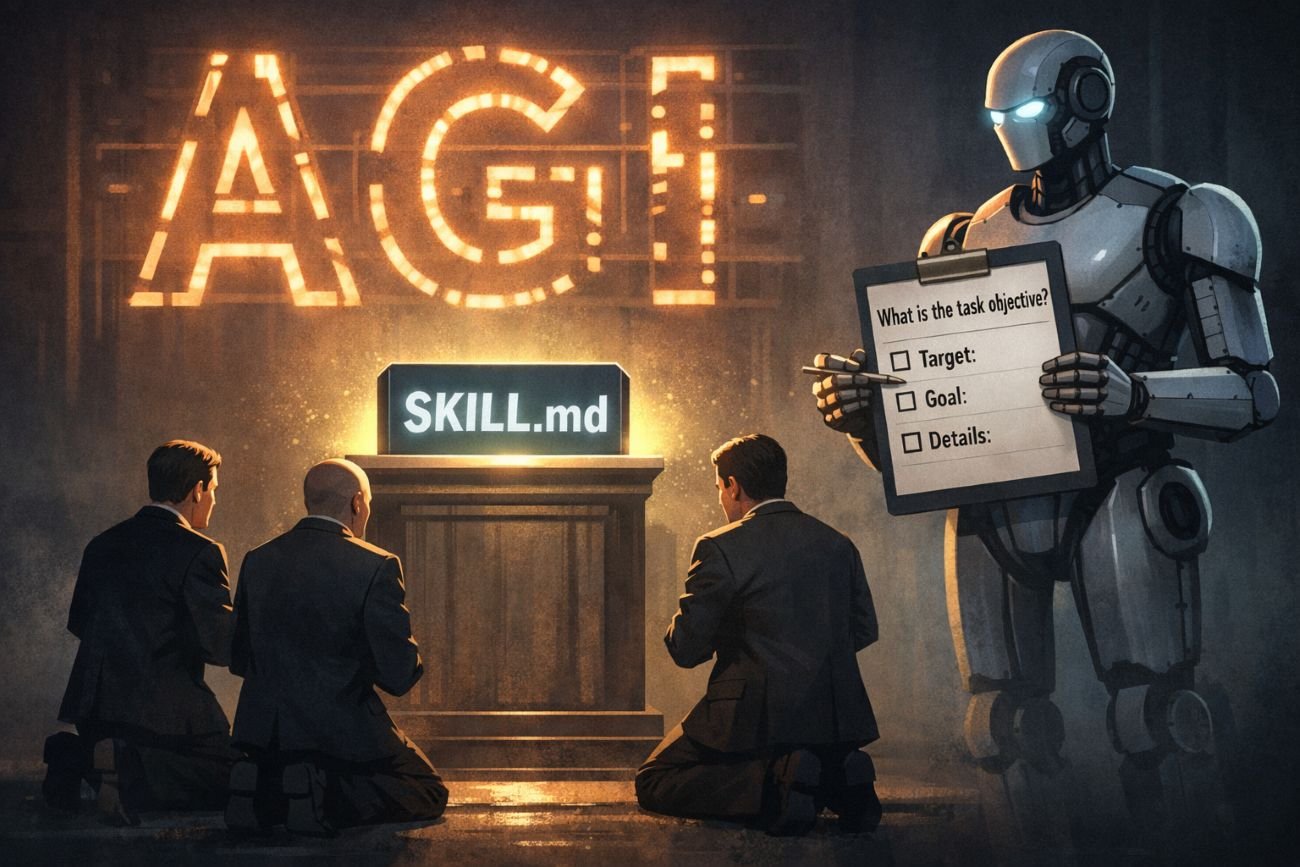

A video about the rise of agent “skills” accidentally highlights the real joke: we are still writing laminated instructions for machines while being asked to clap for the coming of AGI.

Hermit Off Script

The video from Nate gave me the perfect little sermon for the week, because it exposes the thing that irritates me most in the whole AI circus: the industry keeps dressing up assisted competence as if it were some kind of silicon awakening. I watch people talk about prompt engineering like it is the dawn of a noble craft, when half the time it is just an elaborate attempt to stop a model from confidently walking into the wrong room. If the basic prompt is vague, a genuinely smart system should ask the obvious first question by default: what is the target, what exactly needs to be achieved, and what counts as done. If it does not do that, then all this grand theatre about “skills”, “contracts”, “orchestration” and “agentic workflows” starts looking suspiciously like a filing cabinet in a trench coat.

I am not saying these models are useless. Far from it. Sometimes they reach startling levels in narrow domains and make humans look slow, dusty and tragically attached to tabs. But that is exactly why the hype becomes so annoying. The same model that writes elegant code or summarises a jungle of documents can still fumble a plain task unless the instructions are packed like a military ration. OpenAI’s own documentation says reasoning models will often ask clarifying questions before making uneducated guesses, and its GPT-5.4 guidance still leans on explicit output contracts, tool rules and completion criteria. Anthropic, OpenAI and Microsoft are all now documenting skills or MCP-style standards as ways to package instructions, scripts, resources and context more reliably. That is useful engineering. It is not proof of a synthetic mind descending from the heavens in a hoodie.

What bothers me is the confusion between memorisation, retrieval, tool access and actual smartness. Humans do this as well. Many people are walking warehouses with good lighting. Ask them to repeat, compare, sort, cite and posture, and they shine. Ask them to create something truly new and too often you get reheated soup in a nicer bowl. So yes, current AI models mirror us rather well: vast memory, variable judgement, occasional brilliance, frequent absurdity.

I still think there is a missing ingredient between brain-like architecture and real inner intelligence, whatever name one prefers for it. Call it consciousness, call it soul, call it the atomic heart that has not been built yet. Maybe future hardware shifts the gap. Maybe quantum systems do. Maybe none of that does. But for now I am not interested in incense around a calculator with confidence issues. I will happily use the tool. I just will not kneel before it.
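To make the “obvious first question” concrete, here is a minimal sketch in Python of a gate that declines to proceed until a request names a target, its constraints and a definition of done. Everything in it is hypothetical: `TaskRequest`, its field names and the `clarifying_questions` helper are illustrative inventions, not any vendor's API, and a real system would need far more than three blank-string checks.

```python
from dataclasses import dataclass


@dataclass
class TaskRequest:
    """Hypothetical shape of a request; field names are illustrative only."""
    target: str = ""
    constraints: str = ""
    done_when: str = ""


# Question to ask for each field that was left blank.
QUESTIONS = {
    "target": "What is the target?",
    "constraints": "What constraints apply?",
    "done_when": "What counts as done?",
}


def clarifying_questions(request: TaskRequest) -> list[str]:
    """Return one question per blank field, in a fixed order."""
    return [
        question
        for field_name, question in QUESTIONS.items()
        if not getattr(request, field_name).strip()
    ]


if __name__ == "__main__":
    # A vague request: a target is given, but no constraints or finish line.
    vague = TaskRequest(target="Tidy up the quarterly report")
    for question in clarifying_questions(vague):
        print(question)
```

An empty list means the request is specific enough to act on; anything else is a reason to ask before guessing. That the check fits in a dozen lines is rather the point: this is a checklist, not a mind.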

Nate B Jones: Anthropic, OpenAI, and Microsoft Just Agreed on One File Format. It Changes Everything.

What does not make sense

- Selling “autonomy” while shipping more and more structured instruction files, skill manifests and context wrappers to keep the thing on the rails.

- Pretending prompt engineering proves machine intelligence, when its very existence often proves the opposite: the model still needs careful shepherding.

- Talking as if ambiguity has been conquered, while official guidance still spends real effort on when the model should ask, abstain or proceed.

- Confusing huge stores of human-written knowledge with wisdom.

- Dressing interoperability plumbing up as consciousness.

- Treating “works brilliantly sometimes” as identical to “understands deeply”.

Sense check / The numbers

- Anthropic introduced MCP on 25 November 2024 as an open standard for connecting AI systems with external data sources and tools. [Anthropic]

- OpenAI announced Responses API support for remote MCP servers on 21 May 2025, and its current documentation says connectors and remote MCP servers give models new capabilities. [OpenAI]

- Microsoft’s MCP on Windows overview says MCP standardises integrations between AI apps and external tools and data sources, and its Agent Skills documentation describes portable packages of instructions, scripts and resources. [Microsoft]

- OpenAI’s reasoning guide says reasoning models will often ask clarifying questions before making uneducated guesses. [OpenAI]

- OpenAI’s GPT-5.4 guidance says explicit prompting still helps, and smaller models are less likely to infer missing steps or resolve ambiguity unless that behaviour is specified directly. [OpenAI]

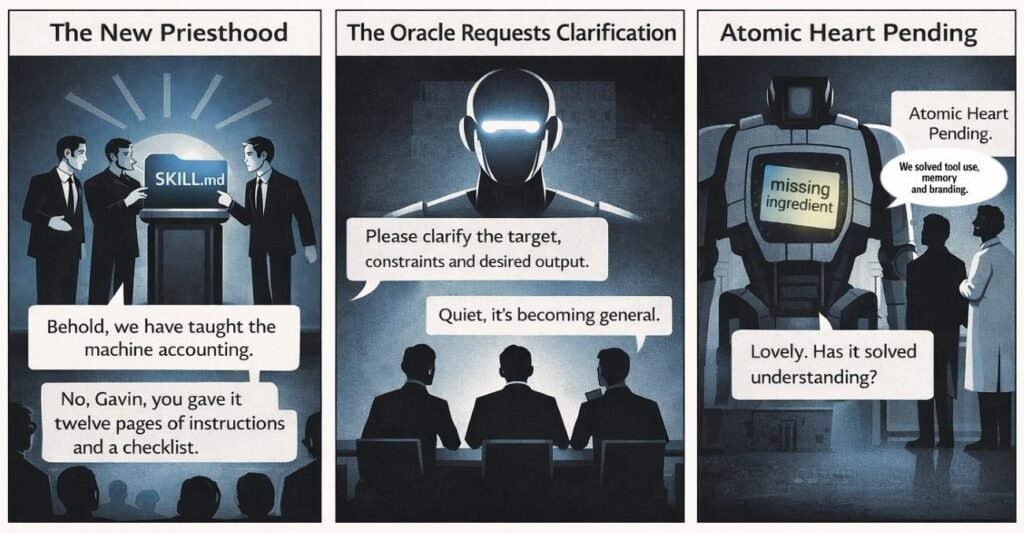

The sketch

Scene 1: The New Priesthood

A conference stage with three prompt engineers in black turtlenecks unveiling a glowing folder called “SKILL.md”.

Bubble 1: “Behold, we have taught the machine accounting.”

Bubble 2: “No, Gavin, you gave it twelve pages of instructions and a checklist.”

Scene 2: The Oracle Requests Clarification

A giant chrome AI head floats above a boardroom table while investors stare in awe. A tiny pop-up appears in front of its face.

Bubble 1: “Please clarify the target, constraints and desired output.”

Bubble 2: “Quiet, it’s becoming general.”

Scene 3: Atomic Heart Pending

A futuristic lab. Scientists stand beside a towering robot chest cavity with nothing inside except a blinking Post-it note reading “missing ingredient”.

Bubble 1: “We solved tool use, memory and branding.”

Bubble 2: “Lovely. Has it solved understanding?”

What to watch, not the show

- Venture money rewarding bigger claims faster than boring reliability work.

- Standards warfare: MCP, AGENTS.md and SKILL.md matter precisely because the dull plumbing decides who becomes the default ecosystem.

- Enterprise demand for repeatable workflows, not mystical sentience in trainers.

- Labour substitution pressure, where “agentic” often means “do more with fewer people”.

- Benchmark theatre, where bursts of competence are sold as durable judgement.

- The widening gap between marketing language and what the documentation quietly admits.

The Hermit take

Useful tools, absurd sermons.

When the machine still needs a manual to ask the obvious question, don’t call it a mind.

Keep or toss

Keep the tooling and the standards.

Toss the halo, the priesthood and the cheap AGI incense.

Sources

- Video inspiration – AI News & Strategy Daily with Nate B. Jones: https://shows.acast.com/ai-news-strategy-daily-with-nate-b-jones/episodes/anthropic-openai-and-microsoft-just-agreed-on-one-file-forma

- YouTube listing for the same video: https://www.youtube.com/watch?v=0cVuMHaYEHE

- Anthropic – Introducing the Model Context Protocol: https://www.anthropic.com/news/model-context-protocol

- Anthropic – Donating the Model Context Protocol and establishing the Agentic AI Foundation: https://www.anthropic.com/news/donating-the-model-context-protocol-and-establishing-of-the-agentic-ai-foundation

- Anthropic – Measuring AI agent autonomy in practice: https://www.anthropic.com/research/measuring-agent-autonomy

- Claude Code docs – Extend Claude with skills: https://code.claude.com/docs/en/skills

- OpenAI API docs – Skills: https://developers.openai.com/api/docs/guides/tools-skills

- OpenAI API docs – MCP and Connectors: https://developers.openai.com/api/docs/guides/tools-connectors-mcp

- OpenAI Developer Community – Introducing support for remote MCP servers: https://community.openai.com/t/introducing-support-for-remote-mcp-servers-image-generation-code-interpreter-and-more-in-the-responses-api/1266973

- OpenAI API docs – Reasoning best practices: https://developers.openai.com/api/docs/guides/reasoning-best-practices

- OpenAI API docs – Prompt guidance for GPT-5.4: https://developers.openai.com/api/docs/guides/prompt-guidance

- OpenAI – Agentic AI Foundation: https://openai.com/index/agentic-ai-foundation/

- Microsoft Learn – MCP on Windows overview: https://learn.microsoft.com/en-us/windows/ai/mcp/overview

- Microsoft Learn – Agent Skills: https://learn.microsoft.com/en-us/agent-framework/agents/skills

- Agent Skills specification: https://agentskills.io/specification
