Lede

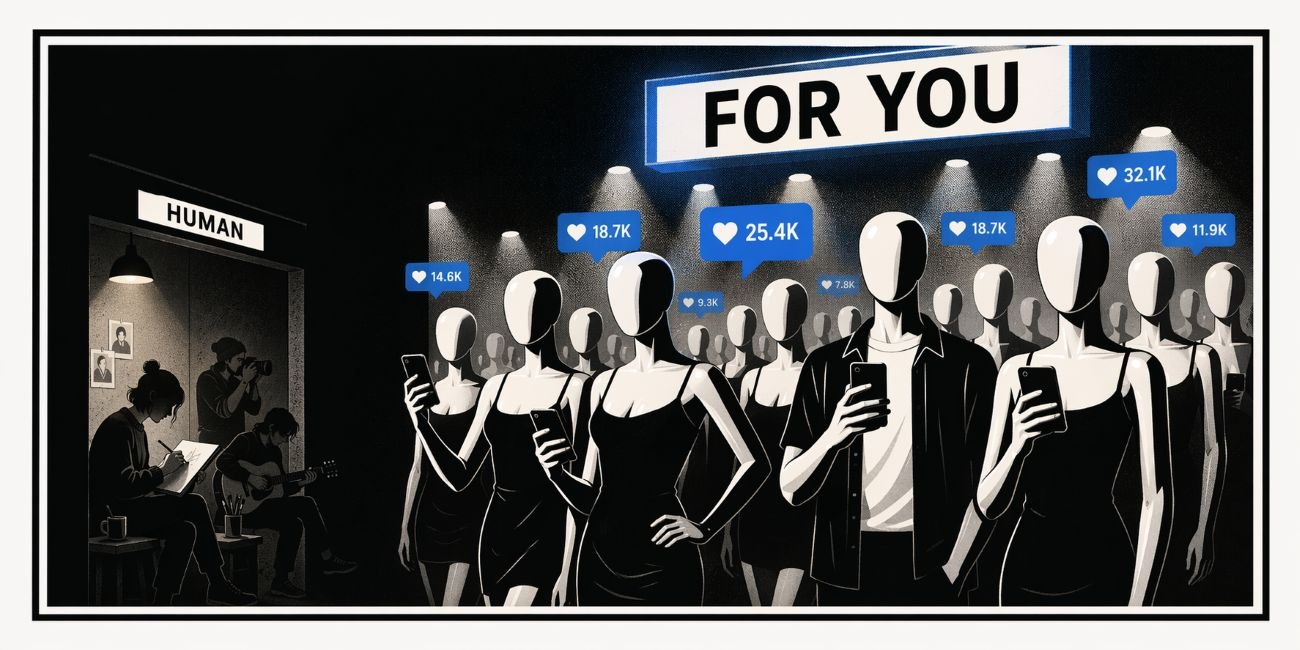

Social media is busy building a future where the audience must bring a magnifying glass just to check whether the person speaking exists.

Hermit Off Script

The rise of AI accounts impersonating real people is not some quirky internet phase to laugh off between adverts and cat videos. It is the beginning of a very stupid masquerade where platforms keep congratulating themselves for innovation while ordinary users are quietly drafted into unpaid detective work. If I were making policy, I would not stop at a tiny label tucked away like a legal rash under the profile name. I would split these accounts into separate channels and keep the main square for actual human beings who still have the decency to be limited by sleep, mood, hunger and the occasional crisis of confidence.

Right now the whole thing is heading toward a feed where polished synthetic charm can post all day, copy any style in minutes, and flood every corner of culture until real people decide the game is rigged and stop bothering. In a twisted way that may even wound the platforms themselves, because a network full of fake vitality eventually starts smelling like an empty shopping centre with background music.

But for artists, writers, small creators and anyone trying to share something fragile and real, this is not a philosophical problem. It is a practical mugging. Their work can be cloned, improved, repackaged and pushed out at industrial speed by accounts that never get tired and never doubt their own rubbish. Human art will start looking like handmade furniture in a warehouse of flat-pack copies: slower, rarer, dearer, and hopefully still worth more to anyone with a pulse. The danger is not only fakery. It is exhaustion. The machine does not need to beat human truth. It only needs to bury it under volume.

What does not make sense

- Platforms say they care about authenticity while stuffing the same feed with tools that mass-produce believable fake people.

- A tiny label is treated like moral hygiene, even though most users do not inspect profiles like forensic accountants.

- Real creators need years to build trust, while synthetic clones need one prompt, a clean jawline and a posting schedule.

- The companies want human engagement, but they are training users to mistrust faces, voices and even ordinary enthusiasm.

- If a platform can build separate tabs for shopping, reels, stories and live streams, it can build a separate lane for synthetic personas.

- The burden is backwards: users must spot the fake, creators must defend originality, and platforms still collect the traffic.

Sense check / The numbers

- Meta said in 2024 it would label AI-generated images on Facebook, Instagram and Threads when it detects industry-standard indicators, and in March 2026 it said it had removed more than 20 million accounts impersonating large creators during 2025. [Meta]

- TikTok says creators must disclose realistic AI-generated content, and the company said its labelling efforts had tagged over 1.3 billion videos to date. It is also testing controls that let users tune how much AI-generated content they see in their feed. [TikTok]

- YouTube requires creators to disclose content that is meaningfully altered or synthetically generated when it seems realistic, including clips that make a real person appear to say or do something they did not. [YouTube]

- Ofcom said only 34 per cent of adults felt confident recognising AI-generated content online. [Ofcom]

- The European Commission says the AI Act’s transparency obligations will apply from 2 August 2026. [EU]

- The UK Commons Library said platform policies vary widely: some rely on self-disclosure, some on automatic labels, and some offer far less explicit guidance. [Commons Library]

The sketch

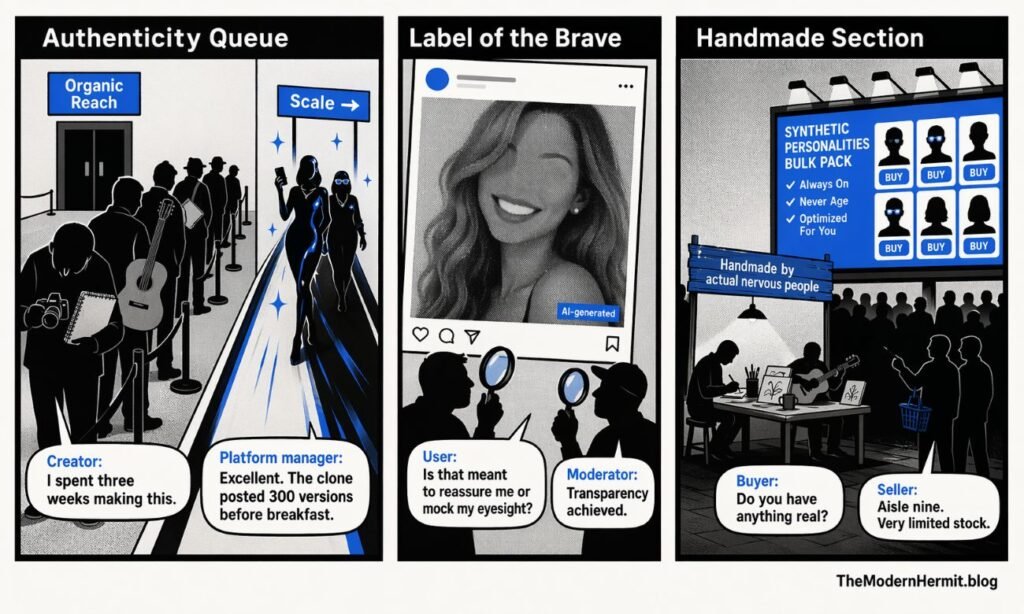

Scene 1: “Authenticity Queue”

Panel description + dialogue: A long queue of tired human creators holds cameras, sketchbooks and guitars outside a door marked “Organic Reach”. Meanwhile glossy AI influencers glide through a fast lane marked “Scale”.

Creator: “I spent three weeks making this.”

Platform manager: “Excellent. The clone posted 300 versions before breakfast.”

Scene 2: “Label of the Brave”

Panel description + dialogue: A giant photorealistic avatar smiles from a billboard while a microscopic label reading “AI-generated” sits in the corner like a guilty breadcrumb. Users squint at it with magnifying glasses.

User: “Is that meant to reassure me or mock my eyesight?”

Moderator: “Transparency achieved.”

Scene 3: “Handmade Section”

Panel description + dialogue: In a future market, human artists sit under a small sign reading “Handmade by actual nervous people” while a stadium-sized screen sells synthetic personalities in bulk.

Buyer: “Do you have anything real?”

Seller: “Aisle nine. Very limited stock.”

What to watch, not the show

- Incentives for volume over truth

- Cheap synthetic labour versus expensive human time

- Weak identity checks and uneven consent rules

- Recommendation systems that reward frequency, not authenticity

- Monetisation models that do not care whether the charisma is grown or generated

- Regulatory lag while product teams sprint ahead

- Creator burnout as the hidden subsidy behind “innovation”

The Hermit take

A feed full of fake people is not a community.

It is a showroom wearing a human face.

Keep or toss

Keep AI tools, clear labels and a separate synthetic lane.

Toss impersonation theatre out of general discovery until the line between human and machine is obvious enough for someone without a microscope.

Sources

- Meta – Labeling AI-generated images on Facebook, Instagram and Threads: https://about.fb.com/news/2024/02/labeling-ai-generated-images-on-facebook-instagram-and-threads/

- Meta – Rewarding original creators on Facebook: https://about.fb.com/news/2026/03/rewarding-original-creators-on-facebook/

- TikTok Help – About AI-generated content: https://support.tiktok.com/en/using-tiktok/creating-videos/ai-generated-content

- TikTok Newsroom – More ways to spot, shape and understand AI-generated content: https://newsroom.tiktok.com/more-ways-to-spot-shape-and-understand-ai-content?lang=en

- YouTube Help – Disclosing use of altered or synthetic content: https://support.google.com/youtube/answer/14328491?hl=en

- Ofcom – Ofcom’s strategic approach to AI: https://www.ofcom.org.uk/about-ofcom/annual-reports-and-plans/ofcoms-strategic-approach-to-ai

- European Commission – Consultation on transparent AI systems: https://digital-strategy.ec.europa.eu/en/news/commission-launches-consultation-develop-guidelines-and-code-practice-transparent-ai-systems

- UK Commons Library – AI content labelling briefing: https://researchbriefings.files.parliament.uk/documents/CBP-10467/CBP-10467.pdf